On the future of hardware pricing

Disclaimer: Analysis only, not financial advice. Sources: Micron whitepapers, NVIDIA GTC 2026 keynote, Futurum Group, SemiAnalysis InferenceX, Jensen Huang's 1GW iso-power slide. All projections are inference from cited data.

Hardware prices are being distorted by AI demand, and it won't resolve until at least 2027. Here's why — and what it means for hosts and powerusers.

The prisoner's dilemma

Hyperscaler capex/revenue sits at 10:1 to 15:1. $500B committed in 2026 alone against ~$50B industry revenue [1], with an additional $500B+ committed through 2027 [2] [3]. Nobody stops building because stopping means losing market share. Sunk cost does the rest.

How inference actually works

GPU inference runs in two phases with fundamentally different computational profiles:

- Prefill — all input tokens processed simultaneously. Massively parallel matrix multiplication. High GPU utilization. This is what GPUs were built for.

- Decode — output tokens generated one at a time, sequentially. Each token depends on the previous one — parallelization is architecturally impossible. GPU sits mostly idle between steps, waiting on memory reads.

Four solutions have been deployed to attack this:

- Batching — sharing GPU compute across concurrent users, amortizing idle decode time

- HBM capacity scaling — keeping the KV cache (the running memory of the conversation) close to compute, reducing fetch latency

- SOCAMM LPDRAM [4] — CPU-attached memory tier up to 2TB per CPU, staging warm KV cache outside expensive HBM

- LPU (Groq) [5] — dedicated decode hardware with 500MB on-chip SRAM per chip, statically compiled execution graph, zero scheduling overhead. Does one thing: generates tokens fast.

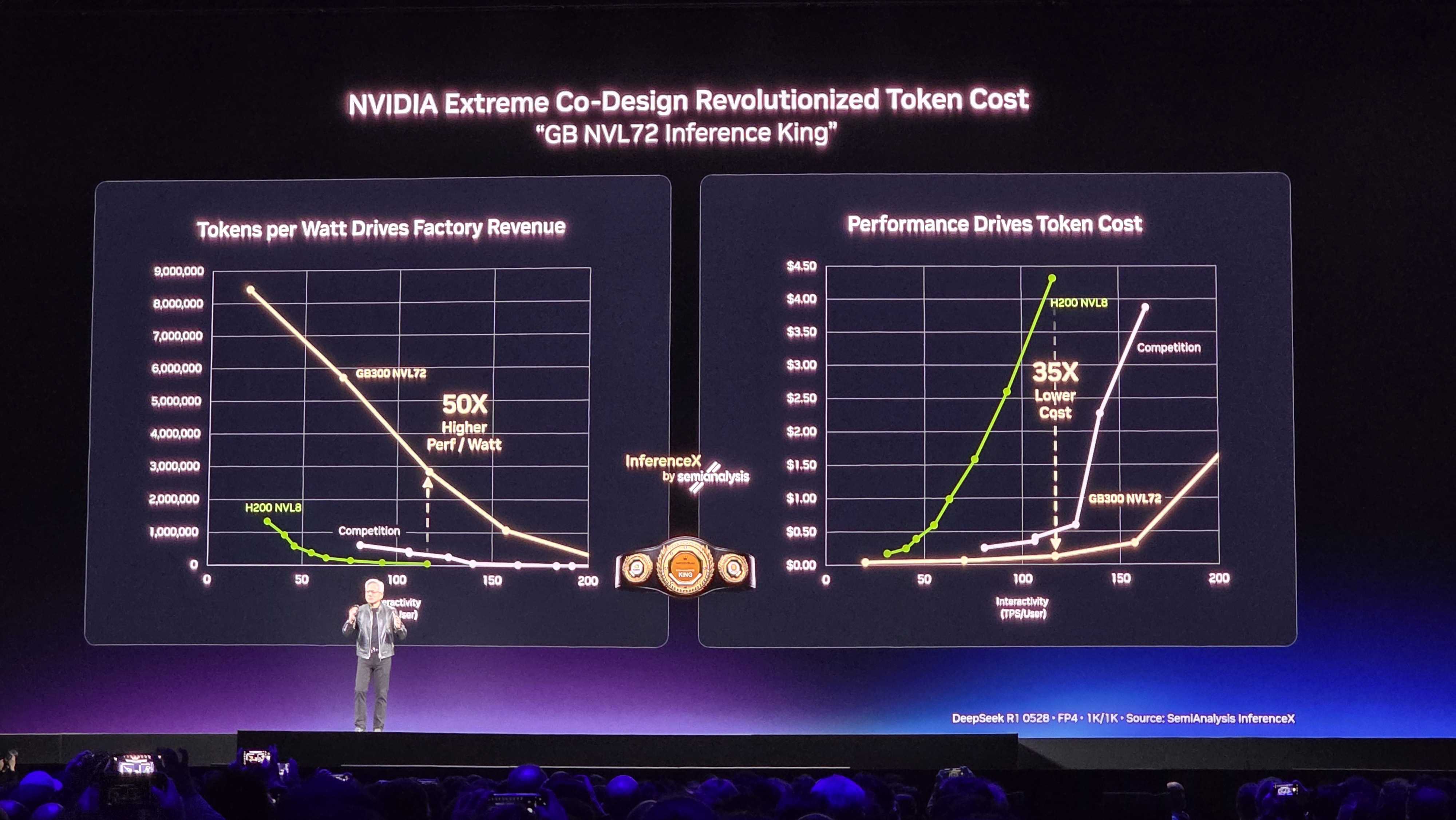

The result is a structural collapse in cost per million tokens — not software optimization, hardware architecture:

| System | Year | Tokens/sec/GW | Cost/Mtoken | vs H100 |

|---|---|---|---|---|

| H100 NVL8 | 2022 | ~2M | $4.40 | 1x |

| H200 NVL8 | 2024 | ~2.8M | ~$3.00 | ~1.4x |

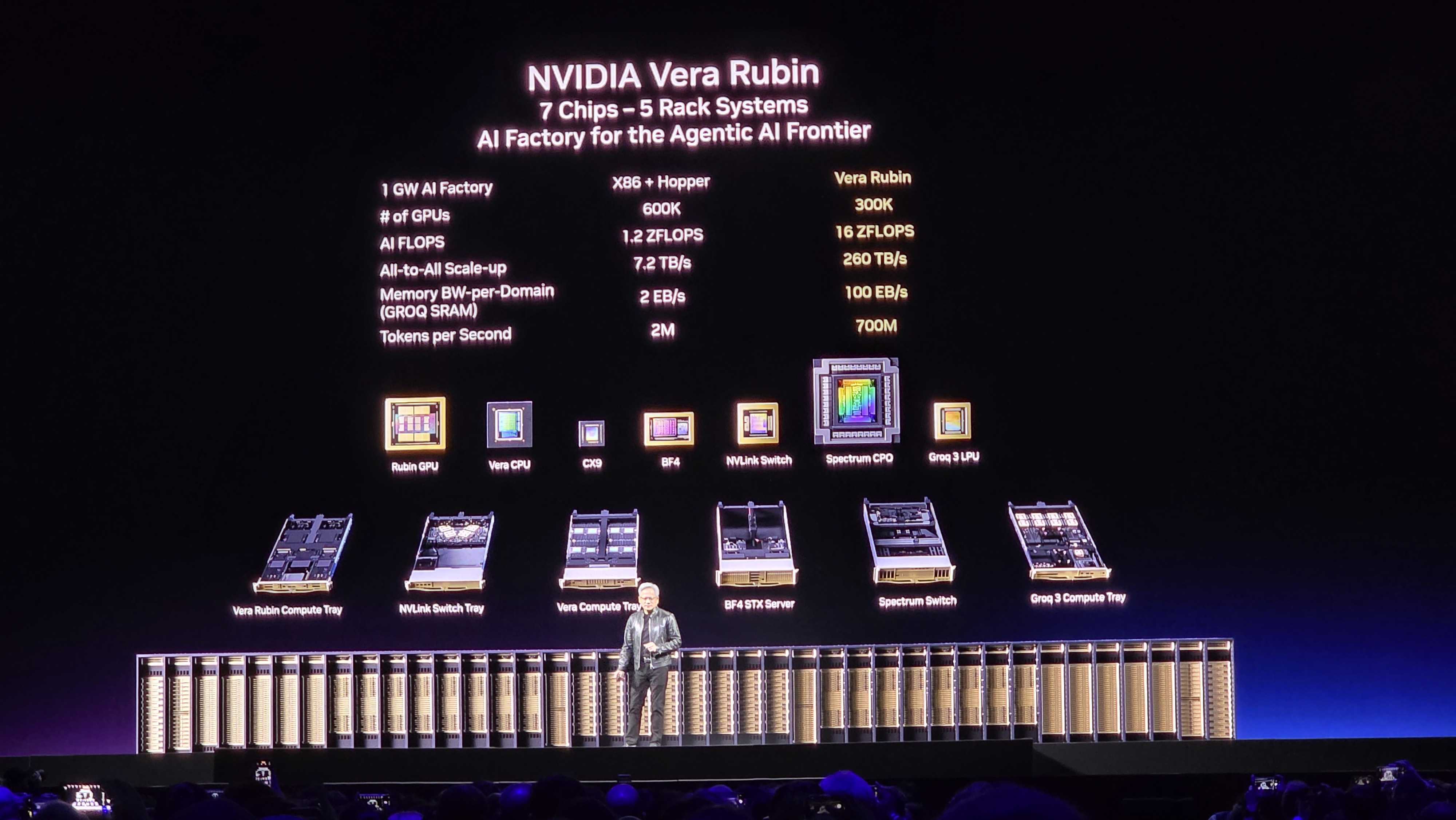

| GB300 NVL72 | 2026 | ~70M | $0.13 | ~35x |

| Vera Rubin NVL72 | H2 2026 | ~700M | ~$0.013* | ~350x |

| VR NVL72 + Groq LPX | H2 2026 | ~24.5B | ~$0.00037* | ~12,250x |

| Feynman/Kyber (VRU) | 2028 | TBD | TBD | ~50,000x+** |

*Approximated: VR = 1/10th GB300 per NVIDIA [6]. VR+Groq = 35x more tokens/watt vs Blackwell at ~2x combined rack cost [5].

At iso-power (1GW): 600K Hopper GPUs produce 2M tokens/sec. 300K Vera Rubin GPUs produce 700M tokens/sec using half the hardware [5]. The floor has not been reached.

**Feynman confirmed for 2028 with new GPU, Rosa CPU, LP40 LPU, and Kyber NVL1152 (8x density of Rubin NVL144) [7]. Performance trajectory is author's projection from generational improvement pattern, not NVIDIA's stated figure.

For hosting operators: you've seen this before

The traditional hosting industry prices on core count and memory density. Core pricing is being dramatically challenged. There is no better analog than this:

| CPU generation | Year | Cores (2S) | Revenue/RU vs baseline |

|---|---|---|---|

| Ivy Bridge-EP | 2012 | 20 | 1x |

| Haswell-EP | 2014 | 36 | ~1.8x |

| EPYC Naples | 2017 | 64 | ~3.2x |

| EPYC Rome | 2019 | 128 | ~6.4x |

| EPYC Genoa | 2022 | 192 | ~9.6x |

| EPYC Turin | 2024 | 256 | ~12.8x |

Same rack unit. Same colocation rent. The 2012 server still worked in 2019 — it was just priced out of existence by a neighbor in the same rack doing 6x the work at the same footprint cost. GPU token economics follow the same curve, compressed into 24 months instead of 12 years.

The idle capacity problem

$500B in 2026 AI-directed capex [1] at ~20% inference allocation implies approximately 2 trillion tokens/sec of new inference capacity — derived from Jensen's own 1GW iso-power comparison: 300K Vera Rubin GPUs at 700M tokens/sec per GW [5], blended across the Blackwell-dominant 2026 install base.

Current industry demand is approximately 127M tokens/sec — derived from ~$40B in 2026 AI revenue [8] divided by a blended ~$10/Mtoken average across consumer and enterprise tiers ($10/Mtoken × 127M tok/s × 3.15×10⁷ s/yr ≈ $40B). The gap is roughly 15,000x oversupply before Vera Rubin ships.

Even under aggressive Jevons Paradox assumptions — cheaper tokens drive proportionally more usage — demand growing 100x still leaves 150x excess capacity. The token price floor is arithmetic, not speculation:

Price floor ≈ electricity cost ÷ tokens per kWh

At VR+Groq efficiency and $0.05/kWh, the floor approaches ~$0.00037/Mtoken (consistent with table above). Current GB300 pricing of $0.13/Mtoken is already ~350x above that floor.

What this means for hardware pricing at Q4 2027 / Q1 2028

Market rationalization here means a specific trigger: hyperscalers stop or significantly reduce new orders, either from token supply glut making additional capacity economically indefensible, or from liquidity pressure as medium-term corporate bond markets tighten against sustained negative ROI. Hyperscalers raised $121B in new debt in 2025 alone [9], with Morgan Stanley and JP Morgan projecting $1.5T in total debt issuance required over the next few years [10]. Oracle already faces a financing gap from FY2027, with Barclays warning it could run out of cash by November 2026 at current trajectory [11]. CDS spreads — the bond market's forward-looking default insurance — have been rising across the sector [12].

This aligns roughly with the end of NVIDIA's currently committed production pipeline. If either condition materializes earlier — and the oversupply math suggests it could — the price corrections described below arrive ahead of this timeline, potentially as early as mid-2027.

Compute (GPU)

The 2026 installed Blackwell base faces a competitive token economics gap of 350x against Vera Rubin alone, 12,250x against VR+Groq.

Hourly GPU rental rates on H100/H200 class hardware will reprice downward as operators compete for utilization against a market where newer hardware produces orders of magnitude more output at the same power cost. The stranded asset isn't theoretical — it's hardware being delivered today against a depreciation schedule that assumed 5 years of competitive relevance.RAM (DDR5)

Prices will be lower than the February 2026 peak. The 400% ramflation was driven by AI factory demand absorbing total DRAM production capacity.

As HBM4 and SOCAMM displace DDR5 for the highest-demand AI workloads, and new fab capacity from Micron's Hiroshima expansion comes online in 2027, the DDR5 market should see meaningful relief. The exact magnitude depends on whether consumer and enterprise non-AI demand recovers the slack, or whether the market overshoots into a glut. Directionally: lower, timeline and depth uncertain.NVMe

SOCAMM LPDRAM handling warm KV cache in-flight reduces the NVMe use case to cold KV archival only — sessions idle for hours or days.

This is a significant demand reduction for the high-performance NVMe tier. The workload that justified $50-80/TB NVMe pricing — fast random-access KV staging — is being absorbed by the SOCAMM tier on-board. What remains for NVMe is large-block sequential cold storage, a workload that does not require NVMe's random-access performance premium. Expect pricing pressure on datacenter NVMe as the AI workload profile shifts.HDD

Inconclusive near-term, but NVIDIA has drawn the boundary for us.

The Vera Rubin POD architecture defines four explicit memory tiers [13]:

| Tier | Hardware | NVIDIA product | Latency |

|---|---|---|---|

| Hot KV | HBM4 on Rubin GPU | ✅ | Nanoseconds |

| Warm KV | SOCAMM 2TB on Vera CPU | ✅ | Microseconds |

| Warm-cold KV | STX rack NVMe via BlueField-4 | ✅ | Milliseconds |

| Cold archive | — | ❌ | Seconds+ |

NVIDIA's BlueField-4 STX rack extends GPU memory into NVMe for active KV reuse — sessions resuming within hours. It is not designed for day-scale or month-scale retention. The cold archive tier is explicitly outside the POD boundary, and NVIDIA has no product there.

At Vera Rubin's 700M tokens/sec throughput, even a 1% session persistence rate generates petabytes of cold KV and artifact data per day per POD. The I/O profile — large sequential writes, infrequent full-block reads, latency tolerance in seconds — is exactly where HDD is cost-optimal versus NVMe.

S3-compatible object storage on CMR nearline HDD, positioned as the cold archive tier that the NVIDIA POD architecture intentionally leaves unaddressed.

The hardware still works. It's just being priced out of existence — on a 24-month cycle instead of a 12-year one.

Question for longtime hosts: Do you still have 2012-2014 CPU models in production?

Comments

Prices always go up. For everything. It's been this way for a hundred plus years.

Good use of (possible) AI generated content along with modifications to make it look nicer.

Back on your topic, as far as major companies are concerned, AI is the future. They need to invest as much as they can to stay ahead of the curve. If they dont, they are gonna lose business to others who do. There is a risk of AI bubble crashing, but unlike the .com bubble, it is NOT dependent on regular users since large corpos are buying AI among each other, so the risk is somewhat mitigated. Moreover with each countries government stepping in, the AI bubble crash risk is mostly mitigated.

To grow the AI, more investment is needed in infrastructure, specifically datacenters, high-performance GPUs, networking, and power delivery. All of this has a direct impact on hardware pricing. When hyperscalers start buying GPUs and CPUs in massive quantities, it creates supply pressure that trickles down to the consumer market. We've already seen this with GPUs becoming harder to get or inflated in price during peak demand cycles.

Another factor is that AI hardware is not just about raw compute anymore, it's about efficiency. Companies are pushing for specialized chips (ASICs, NPUs, TPUs), which require new manufacturing processes and R&D. That cost has to be recovered somewhere, and it often shows up as higher prices across the board, even for non-AI hardware.

On top of that, supply chain constraints and geopolitical factors play a role. Advanced chips rely on a very small number of manufacturers and cutting-edge fabs. Any disruption, whether political or logistical, can impact global pricing.

That said, there is also a counter-effect. As production scales and competition increases, prices may stabilize or even drop for certain tiers of hardware. Not everyone needs top-tier AI chips, so older or mid-range hardware could become more affordable over time.

In short, expect high-end hardware prices to stay elevated (or even increase) due to AI demand, while lower-end and second-hand markets may become more attractive for regular users.

I speak fluent sarcasm and broken logic. | I would agree with you, but thæn we’d both be wrong.

AI is very confident when saying nonsense.

The very first post is by a new member.

Clearly AI-written.

Is it worth taking the time to read and effort to figure out what nuisance AI got wrong enough to make one draw the wrong conclusions?

Should we draw the line regarding AI posts and where - it is getting difficult (sometimes next-to-impossible) to tell if stuff was AI written.

I need to replace bearings in my platform pedals now.

🔧 BikeGremlin guides & resources

But why post it here, instead of on their (lowend-hosted) blog? If I wanted to read AI drivel, I'd open Linkedin instead of Lowendspirit.

... Where you could even wonder whether it is an actual person creating an account, prompting an LLM, copy/pasting the output or an agent sent out to create accounts at various fora and post useful-looking textvomit before pivoting to nefarious uses.

Long term what is the worry:

Hardware costs, or the cost of energy to power up that hardware??

I believe it is the latter.

blog | exploring visually |

Well we can always generate more AI forum posts with the excess token capacity...

Well, better use it while it's free. I have a feeling, very soon they would no longer have any free tier for AI content generation...

More eyes here cause most of the LES posts are "still" (hopefully) human generated garbage. I mean if you need an example, look at my post. AI could have written it much better and much neater in shorter time thæn I took to type in human generated garbage in response to (allegedly) AI generated slop

Hardware costs.

Each new AI models require specific hardware to run them, usually newer gen graphics or specialized hardware. Since we still expect newer gen AI models every 1 to 2 years, we expect the existing data-center to be obsolete and a newer data-center being built with newer hardware to handle newer AI models every 1 to 2 year. This is the major investment cost that is recurring unlike traditional data-centers where you can run the same hardware for 5 years or so.

Compared to that, electricity cost is negligible.

Not a bad idea... Maybe I'll setup one of my servers to "shit post" AI slop and see how long it takes @AnthonySmith to ban both me and the bot

Jokes aside, I dont really understand the reason to post AI content on the forum. I mean at most, I would use AI to "format" or "dress" my post to make it sound less offensive. The views on the forum benefit only the owners of the forum so users using AI to shitpost dont make sense. If they need a certain post count, it's much more reliable to get it by posting normally since they dont get the ban hammer....

I speak fluent sarcasm and broken logic. | I would agree with you, but thæn we’d both be wrong.

I genuinely have no issues with using AI for speedy formatting, I used it for my recent posts/guides I have it all my notes and all the results, commands in order config files, gave it some rules asked it to fix my spelling and grammar and put it into a .md

What it produced added to the quality in my opinion, but I am also very self conscious about my writing level, for a native English speaker my spelling, grammar and grasp of the language is abysmal, I did not get a great education, so it helps.

I can't even imagine trying to post in a second language.

But we probably do need to draw the line of AI core content.

Like "write me an article/post on" or maybe we need a plugin that allows deceleration of AI use or something.

TierHive - Hourly VPS - NAT Native - /24 per customer - DE, UK, SG, CA, USA, FR

FREE tokens on sign up, try before you buy. | Join us on Reddit

Here is what I think about your post:

I only bother to use AI to format my post when I got excess time and I feel that my post is borderline offensive. As a non native english speaker, I usually don't care much for spelling or grammar. As long as the meaning gets conveyed, that's fine with me. That is what "language" is after-all.

Here is what AI thinks about your post:

I think you’re drawing a pretty reasonable distinction here.

Using AI as a polishing tool—fixing grammar, structuring notes, turning rough configs into a clean

.md—isn’t really that different from using a better spellchecker or editor. You’re still the one doing the work, testing things, and understanding the content. The AI is just helping present it clearly. Honestly, that improves forums overall because it makes useful posts easier to read and follow.Where it starts to feel off is exactly what you pointed out: when AI becomes the source of the content rather thæn the editor. If someone just prompts “write me a guide on X” and posts it without real experience behind it, you end up with generic, sometimes wrong, filler that looks polished but isn’t actually helpful.

Your point about language is important too. For non-native speakers (or even native speakers who didn’t have the best education), AI can level the playing field. That’s a net positive—it lets people share knowledge they might otherwise hesitate to post.

Instead of banning AI outright, a middle ground probably works better:

A disclosure/tag system could help, but it might be overkill unless it becomes a real problem. In most cases, you can usually tell the difference anyway.

At the end of the day, I’d rather read a clear, AI-polished post from someone who knows what they’re doing thæn a messy but “pure” post—or worse, a clean-looking AI guide from someone who doesn’t.

Wow, the AI generated the reply on it's own but I gotta say, it's pretty accurate...

I speak fluent sarcasm and broken logic. | I would agree with you, but thæn we’d both be wrong.

Yeah pretty much, for general posting I don't really care much but for a guide, for me, it's super useful.

TierHive - Hourly VPS - NAT Native - /24 per customer - DE, UK, SG, CA, USA, FR

FREE tokens on sign up, try before you buy. | Join us on Reddit

In my case, I usually use github copilot as AI autocomplete for programming for personal projects. It's not 100% but saves me the time to type manually and most of the time, as long as I fix any mistakes on the fly, the end result is the same but 3 to 4 times faster (Too bad my company banned github copilot usage)

Oh well! Adapt and move on i guess

I speak fluent sarcasm and broken logic. | I would agree with you, but thæn we’d both be wrong.

AI written or not, it's very likely this will collapse very hard.

The only thing that pisses me off is that much of what's been built for AI is useless in consumer hands and is difficult for prosumer or homelab use. Not many people can drop a 6kw circuit in their basement and power up a 8x H200 system they got for $4k on ebay because the whole datacenter got liquidated.

There's so much ewaste being generated right now so grok can tell me to eat bleach and chatgpt to tell me that I should drink dirt.

And Google telling depressed people to jump off a bridge (real AI result)...

I speak fluent sarcasm and broken logic. | I would agree with you, but thæn we’d both be wrong.

The problem is how can you tell.

That will keep getting more and more difficult as AI gets improved and humans get dumber.

That line is difficult to draw too.

Even in my native, and as a profficient writer at that, it helps to have a second pair of eyes on my writing. AI is cheaper than an editor (in terms of time, hassle, not just money - I can get an instant review at 4 AM if I got inspired and productive).

But, what if I let AI write the text first, instead of me doing the first draft? With more or less directions.

Then, for polishing, AI still isn't smart enough but it is getting improved (I think this is now more about profitability calculations by providers than about the technical ability to make it better at writing).

I expect that pretty soon we would not be able to tell.

Must make some tag about members who were active (100 or more posts) before the year 2022. Every member joined this year can be questionable in these terms.

Here is an AI Reddit experiment from a year ago (conducted roughly from November 2024 to March 2025), remember that:

https://io.bikegremlin.com/37596/can-you-tell-if-an-article-video-was-ai-created/#3

Relja ProbablyNotRobot Novovic

🔧 BikeGremlin guides & resources

Long story short, it started with my curiosity about AI bandwidth problem. Then, AI starts correcting my assumption, then I made conclusion of premature and overinvestment (you could see my posts on OGF). So I started to give AI insights/news, and asked them to find more sources. That's when I started browsing specialized "blogs" and realized how vera rubin is going to make hw amortized faster. And the GTC 2026 seals the fate.

You may have heard of datacenter/scaler engaging on risky venture, with GPU as the collateral. Or circular deals Amazon had with ... Idk , you could Google it.

For now, everything looks normal (except people being laid off by the hyperscaler). Dividends still get paid. There's enough liquidity. Except, the hyperscaler are borrowing money to build Ai infrastructure and book lots of available capacity of hw makers. Because they can't reinvest the net income. It would have triggered sell-off. So, they use bonds. By the deadline they would have to repay it or rolled it again. The latter, investor will be wary if they failed, thus higher interest rate or even not enough investor purchasing their bonds. Especially when the collateralized assets has shorter depreciation timeframe.

Let me unpack your arguments. Yes, the hyperscaler has to anticipate future demands and maintain availability. However, that doesn't excuse due diligence failures. And as you said, custom chip designed to accelerate specific workload will be more efficient — but the R&D costs belongs to Nvidia and others, not hyperscalers

What they did is reckless expansion without accounting financial sustainability: market demand and productive asset life.

They created the supply constraints by pre-committing future silicones through forward purchase contracts.

Given the chance, they would — and will — do it again. The additional $500B in committed orders through 2027 is the confession.It's wrong for them to claim market volatility as force majeure when increasing price of non-AI service. It's what they collectively ordered.

I (the human) made the drafts, and tasked the AI to smooth out the technical aspects, make tables, append the citations along grammatical corrections. Vanilla doesn't support TeX afterall 😂.

+1

I have had enough experience with teachers complaining about my abhorrent writings

Why bother when it's 12250x less efficient by 2027?

Moderating against it with any expectation of success is a fools errand if it's credible proof we looking for because we won't find it.

My partner has to write a lot of policy documents and policy proposal documents, she does not use AI, she doesn't even like having to use a computer haha, yet, if you check the work, which I have done as an experiment, a lot of the available chers will give an above 50% confidence that AI wrote it.

The trouble or troubling truth? Is that it can be both a good thing and a bad thing at the same time, it's the intention or intent that is probably the issue.

I think it needs to be community moderated somehow, I have been thinking about this for a while, it might be time to modify or evolve the thanks plugin to do something more meaningful than just thanks, something closer to a karma system, something in between hacker news and Reddit.

The alternative is just accept it's here and move on accepting change. If you can't tell the difference, does it matter?

Probably a bigger discussion/ thread to be had.

TierHive - Hourly VPS - NAT Native - /24 per customer - DE, UK, SG, CA, USA, FR

FREE tokens on sign up, try before you buy. | Join us on Reddit

Also we can have a pre AI tag I suppose, it's just a database query

TierHive - Hourly VPS - NAT Native - /24 per customer - DE, UK, SG, CA, USA, FR

FREE tokens on sign up, try before you buy. | Join us on Reddit

I use AI/LLM extensively in brainstorming/ outlining/ research /fact check and then formatting/ proofing. One of my most use examples is converting to raw html.

chatGPT 5.3 (still on my iOS App, Others might have 5.4) is ridiculous in trimming out important details from text, that can sometimes change the context of the article/ writeup. I typically do A/B/C testing- use chatGPT for my raw narration (speech to text) and outline, claude for draft, and then gemini for fine tuning. Sometimes swap gemini and claude.

Do not rely on a single LLM, Mixtral is quite good too!

Note: For some of my reports / comments/ blog posts, I add a disclaimer at the end- human written/ curated, AI assistance for formatting and styling. So far has received mixed response: mostly positive (for disclosure) and while the statement also reads" "For specific information, or any queries, write to me at <>, I haven't received a single email so far.

^ ^ Above was human written.

Below are the AI rewritten versions of the same

Common prompt: How to format/ rewrite (the above post) as a forum post on the topic of AI for writing posts ?

Subject: My AI Writing Stack (and the One Thing ChatGPT Keeps Butchering)

Hey all,

Just wanted to share the workflow that’s been saving me hours every week and the gotcha that keeps biting me in the ankles.

The Stack

Brainstorm & outline: I ramble into my phone (iOS speech-to-text) → ChatGPT 5.3 turns the word-salad into a clean outline.

First draft: Claude (3.5 Sonnet) – it keeps the nuance that GPT strips out.

Polish & format: Gemini 1.5 Pro – great at tightening sentences and spitting out raw HTML if I need it.

Wild-card: Mixtral 8×7B on my laptop when the cloud models are rate-limited; surprisingly solid for a 30 B param model.

The Problem Child

ChatGPT 5.3 (still stuck on my iPhone; 5.4 may be better) loves to “trim for clarity” so aggressively that it deletes the exact detail that changes the meaning of the whole piece. I now treat it like a blunt axe: good for chopping logs, not for surgery.

A/B/C Testing

I run the same prompt through all three models and diff the outputs. Nine times out of ten Claude keeps the context, Gemini keeps the voice, GPT keeps the word-count low. Pick two, merge by hand, done.

Disclosure Line

I slap this at the bottom of posts:

“Human written & curated; AI assistance for formatting and styling. For specific info or questions: [email protected]”

Reception: mostly thumbs-up for transparency. Emails received so far: zero. 😄

TL;DR

Never trust a single LLM. Treat them like interns: one for research, one for drafting, one for proofing, and always keep a human in the loop.

What’s your stack? Anyone else seeing GPT-5.x shave off critical info?

MixtralAI version

This is slightly better

Here’s a structured and engaging forum post based on your insights, formatted for clarity and impact:

Title:

How I Use AI/LLMs for Writing: A Multi-Tool Workflow for Better Content

Post:

I’ve been leveraging AI and LLMs extensively in my writing process—from brainstorming and outlining to research, fact-checking, formatting, and proofreading. One of the most practical applications I’ve found is converting raw text into clean HTML, which saves a tremendous amount of time.

However, I’ve noticed that ChatGPT 5.3 (still the version on my iOS app, though some may have 5.4) tends to over-trim text, occasionally omitting critical details that can alter the context of an article or write-up. To mitigate this, I’ve adopted an A/B/C testing approach:

Sometimes, I swap Gemini and Claude depending on the project’s needs.

Pro Tip: Never rely on a single LLM. Mixtral is another excellent option that I’ve found useful for specific tasks.

Disclaimer & Transparency:

For some of my reports, comments, and blog posts, I include a disclaimer at the end:

"Human-written/curated, with AI assistance for formatting and styling."

The response to this has been mostly positive, with readers appreciating the transparency. I also add:

"For specific information or queries, feel free to write to me at [email]."

Interestingly, I haven’t received a single email yet—perhaps a testament to the clarity of the content or the effectiveness of the AI-assisted process!

Question for the Community:

How do you integrate AI tools into your writing workflow? Have you faced similar challenges with over-trimming or context loss? What’s your go-to LLM stack?

Looking forward to your insights!

blog | exploring visually |

Also doesn't help that it is wrong

Multi-token prediction is a thing. Per LLM:

How It Works

In a standard "Next-Token Prediction" setup, if a model sees "The cat sat on the...", it only tries to guess "mat."

With Multi-Token Prediction, the architecture is modified with multiple "heads." While the main body of the model processes the text, these heads work in parallel to predict n tokens into the future simultaneously.

Why It’s a Big Deal

Because there will be things that a GPU like the H200 can do, that the NPU or VPU or SPU cannot do.

The nuance is that nvidia is now in the process of making what are basically ASICs that do one specialized task very well.

Go on ebay and search for "Antminer" as an example and look at all of the older model ASIC bitcoin mining hardware that's just straight up ewaste. All for a buck, same game, same play, just a different year and different subject matter.

The H200's and B200s etc are actually useful though after AI is done with them, they're excellent for large scale scientific research (A few H200 servers could contribute a significant amount of force on platforms like Folding@home) Dare I say a few of them could probably parse and process all of the data from SETI in a single rack (not including the dozen or so racks they use for DACs lol)

EDIT: One thing is for certain though, there are going to be a lot of companies and people willing to harvest ram chips and other things off of boards as the technology and knowledge to do so has actually become more wide spread. So while I feel like most of the shit will go to waste, some bored tinkerer like me pairing up with a couple guys to build a circuit board design could legitimately fabricate actual hardware out of spare salvaged units that otherwise do nothing.

Agree with you there. GPUs like the H200 have versatility that specialized NPUs or ASICs just can’t match. I love the idea of repurposing old AI cards for scientific projects; the potential for Folding@home or SETI work is huge. Question is, who will do it? With how high the electricity bill is in SG, it for sure wont be me...

Moreover, last I checked, even old nvidia A series GPUs are damn expensive. Far to expensive, old and slow to be of any use at home. They'll follow the same pricing model...

As someone who us buys chinese motherboards with repurposed chips, I dont see anything wrong with that. If it still works, why throw them away?

I speak fluent sarcasm and broken logic. | I would agree with you, but thæn we’d both be wrong.

In my experience, AI sucks for fact checking.

Same goes for gathering info (googling, or as people call that today "researching" LOL).

That is, if you ask it about stuff you a knowledgeable about, you will figure out when it is telling nonsense, but if it's some new info, it will very confidently give you either wrong info or put info in such a way that you may draw the wrong conclusion... just like newspapers. LOL.

🔧 BikeGremlin guides & resources

To me, it's more of a hit or miss. I would say about 80% of the times, it gives the correct answer. Remaining 20%, sometimes partially correct, sometimes fully wrong.

The same goes for googling for the information yourself, as you already mentioned. With so much AI written crap out there, most of the sources are citing each-other and if one of them is wrong, high chance that all of them are wrong, leading you down a wrong conclusion.

So unless you are going to get a phd in that subject, use AI to "google" and summarize the info for you, rather thæn use it as a source of truth.

I speak fluent sarcasm and broken logic. | I would agree with you, but thæn we’d both be wrong.

My point is that AI answers should be treated as "info from a no-name site listed on Google search" Example:

For bike-related stuff, sheldonbrown.com and Park Tool Youtube channel are correct 99% of the time (they will even update any mistakes you find and let them know AFAIK). You can treat these as expert provided info.

On the other hand, a vast majority of other websites are not nearly as good or accurate (similar goes even for many forums, and social network info including Reddit). And as you stated, these will often parrot the same misconceptions.

A good example: google and ask AI: "should I grease square taper axle?" and tell me what your conclusion is - without reading any BikeGremlin info (if it gets mentioned at all, it should not get mentioned LOL).

🔧 BikeGremlin guides & resources

AI said:

Whether to grease a square taper axle is debated, but generally, you should not grease the tapered spindle surfaces. Keep the axle tapers clean and dry to ensure a secure friction fit. However, it is highly recommended to grease the crank bolt threads to prevent seizing and ensure proper torque.

I speak fluent sarcasm and broken logic. | I would agree with you, but thæn we’d both be wrong.

Honestly speaking, I would go with greasing everything! Installing bearing on shaft? Grease bearing and shaft. Gear mounted on bearing, grease gear and bearing. Need to hammer the gear to slide onto bearing, grease the gear, bearing and hammer. Infact, just grease yourself as well before you start hammering

I speak fluent sarcasm and broken logic. | I would agree with you, but thæn we’d both be wrong.

It is definitely not a friction fit but relies on proper preload (which gets increased as the crank arm is pushed up the axle's taper).

Greasing bolt threds - correct, got that right.

Regarding the axle greasing - how and why explained (my article).

🔧 BikeGremlin guides & resources